Presentation

Interactive In-Situ Visualization of Large Distributed Volume Data

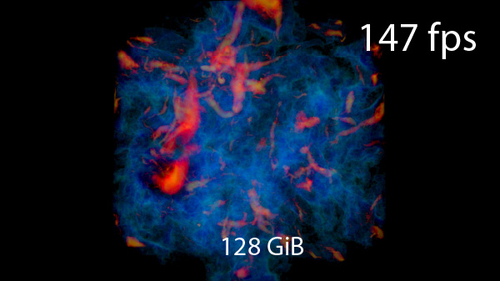

Description

Large distributed volume data are routinely produced in numerical simulations and experiments. In-situ visualization, the visualization of simulation or experiment data as it is generated, enables simulation steering and experiment control, which helps scientists gain an intuitive understanding of the studied phenomena. Such data exploration requires interactive visualization with smooth viewpoint changes and zooming to convey depth perception and spatial understanding. As data sizes grow, sustaining this interactivity becomes increasingly challenging.

This thesis presents an end-to-end solution for interactive in-situ visualization on distributed computers based on novel extensions to the Volumetric Depth Image (VDI) representation. VDIs are view-dependent, compact representations of volume data that can be rendered faster than the original data.
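
To make the representation concrete, the following is a minimal sketch of how the per-pixel part of a VDI might be built along a single ray. It assumes the common formulation in which each pixel stores a short list of "supersegments" (a depth range plus accumulated color and opacity). The names `Supersegment` and `build_pixel_list`, the color-difference criterion, and the `threshold` parameter are illustrative assumptions, not the thesis's actual data layout or algorithm.

```python
from dataclasses import dataclass
from typing import List, Tuple

# One classified sample along a ray: (r, g, b, opacity), color not premultiplied.
RGBA = Tuple[float, float, float, float]


@dataclass
class Supersegment:
    """Hypothetical per-pixel VDI entry: a depth range with accumulated color."""
    start: float                       # depth at which the segment begins
    end: float                         # depth at which the segment ends
    color: Tuple[float, float, float]  # premultiplied, accumulated front to back
    alpha: float                       # accumulated opacity


def build_pixel_list(samples: List[RGBA], step: float,
                     threshold: float = 0.1) -> List[Supersegment]:
    """Merge consecutive samples of similar color into supersegments.

    Fully transparent samples are skipped, so empty space costs no storage.
    """
    segments: List[Supersegment] = []
    current = None  # segment currently being extended
    ref = None      # color of the first sample in the current segment
    for i, (r, g, b, a) in enumerate(samples):
        depth = i * step
        if a == 0.0:
            # Empty space closes any open segment.
            if current is not None:
                segments.append(current)
                current = None
            continue
        if current is not None:
            diff = max(abs(r - ref[0]), abs(g - ref[1]), abs(b - ref[2]))
            if diff > threshold:
                # Color changed too much: close the segment, start a new one.
                segments.append(current)
                current = None
        if current is None:
            current = Supersegment(depth, depth, (0.0, 0.0, 0.0), 0.0)
            ref = (r, g, b)
        # Front-to-back alpha compositing within the segment.
        t = 1.0 - current.alpha
        current.color = tuple(c + t * a * s
                              for c, s in zip(current.color, (r, g, b)))
        current.alpha += t * a
        current.end = depth + step
    if current is not None:
        segments.append(current)
    return segments


samples = [
    (0.0, 0.0, 0.0, 0.0),   # empty
    (1.0, 0.0, 0.0, 0.5),   # red
    (1.0, 0.0, 0.0, 0.5),   # red, merged with the previous sample
    (0.0, 0.0, 0.0, 0.0),   # empty
    (0.0, 0.0, 1.0, 0.25),  # blue
]
pixel_list = build_pixel_list(samples, step=1.0)
```

In this toy ray, five samples collapse into two supersegments, which illustrates why such a representation can be more compact than the sampled volume when the data is sparse or smooth.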

We propose the first algorithm to generate VDIs on distributed 3D data, using sort-last parallel compositing to scale to large data sizes. Scalability is achieved by a novel compact in-memory representation of VDIs that exploits sparsity and optimizes performance. We also propose a low-latency architecture for sharing data and hardware resources with a running simulation. The resulting VDI is streamed for remote interactive visualization.
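
The sort-last idea can be sketched for a single pixel as follows. Each process is assumed to have produced a depth-sorted list of segments for the same ray over its own subdomain, and the subdomains are assumed not to overlap in depth along that ray (as with a convex domain decomposition). The tuple layout and the function names `merge_pixel_lists` and `composite` are hypothetical; this is an illustration of the general technique, not the compositing scheme or memory layout proposed in the thesis.

```python
import heapq
from typing import List, Tuple

# Hypothetical segment layout: (start_depth, end_depth, premultiplied_rgb, opacity).
Segment = Tuple[float, float, Tuple[float, float, float], float]


def merge_pixel_lists(per_rank: List[List[Segment]]) -> List[Segment]:
    """Merge the depth-sorted segment lists of all ranks for one pixel.

    Each input list is already sorted by start depth, so a k-way merge
    yields a single front-to-back list without a full re-sort.
    """
    return list(heapq.merge(*per_rank, key=lambda seg: seg[0]))


def composite(segments: List[Segment]) -> Tuple[Tuple[float, float, float], float]:
    """Front-to-back alpha compositing of a merged segment list."""
    color = (0.0, 0.0, 0.0)
    alpha = 0.0
    for _, _, rgb, a in segments:
        t = 1.0 - alpha  # remaining transparency in front of this segment
        color = tuple(acc + t * ch for acc, ch in zip(color, rgb))
        alpha += t * a
    return color, alpha


# Two ranks contribute one segment each; rank order is deliberately not depth order.
rank_near = [(0.0, 1.0, (0.5, 0.0, 0.0), 0.5)]
rank_far = [(1.0, 2.0, (0.0, 0.5, 0.0), 0.5)]
merged = merge_pixel_lists([rank_far, rank_near])
final_color, final_alpha = composite(merged)
```

The merged list is itself a valid per-pixel segment list, so the result can be kept as a VDI for later re-rendering rather than being flattened into a final image immediately.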

We provide a novel raycasting algorithm for rendering streamed VDIs, significantly outperforming existing solutions. We exploit properties of perspective projection to minimize calculations in the GPU kernel and leverage spatial smoothness in the data to minimize memory accesses.

The quality and performance of the approach are evaluated on multiple datasets, showing that the approach outperforms state-of-the-art techniques for visualizing large distributed volume data. The contributions are implemented as extensions to established open-source tools.
